MAKE THE MOST OF YOUR VACATION RENTAL

RENTAL CABIN MANAGERS

At Avada,

WE TAKE CARE OF YOUR PROPERTY & GUESTS

We handle everything relating to your short-term rental, from promoting it on the right platforms and dealing with guests to keeping it in great shape and optimizing your returns.

This means no hassle and more free time for you.

WORRY-FREE MAINTENANCE & PROTECTION

GET TOP-NOTCH MARKETING

Get access to a team of former Airbnb talent, professional photographers, and skilled copywriters who will make your property look its best and boost your visibility on the right platforms.

NO STRESS WITH GUESTS

Regain control of your time and let us deal with your guests and answer their questions and requests. Day and night, from pre-booking to checkout and follow-up, we love hosting and caring for our guests.

INCREASE YOUR REVENUE

Leverage our tech-geek rate optimization strategies to maximize your nightly fees and your occupancy. Expect a minimum of $2,500 more in yearly revenue.

LOW & ALL-INCLUSIVE FEE

Pigeon Forge and Gatlinburg area. It covers marketing, guest relations, and property management. It also covers replacing minor items, such as air filters and light bulbs.

TRANSPARENCY

handymen, and our in-house team works at a low hourly rate. We agree on everything upfront with you in absolute transparency. No hidden fees or unpleasant surprises. Ever.

MARKET KNOW-HOW

As leading players in the Smoky Mountains, we know what your guests expect and appreciate. We also know what they don’t expect, so we can save you money and time.

ENJOY AS MUCH AS YOU WANT

Stay at your vacation home without restrictions.

We won’t stop you from enjoying your own property.

HEAR FROM OUR OWNERS

Avada’s Success Blog:

Learn. Execute. Prosper

Think Property Managers Are Overrated? Here Are 5 Ways They Can Help You And Make Your Cabin More Profitable

“Do I really need a property manager for my short-term rental?” This question is as old as the short-term rental industry itself. People wonder if they really need someone to take care of their cabins for them. The answer is… It depends. Are you a person with plenty of patience? Do you love hospitality? Are […]

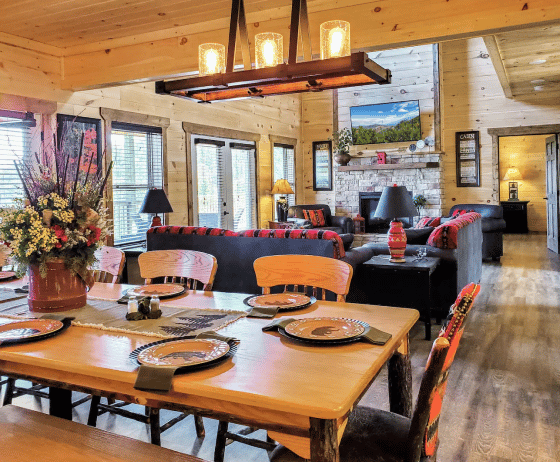

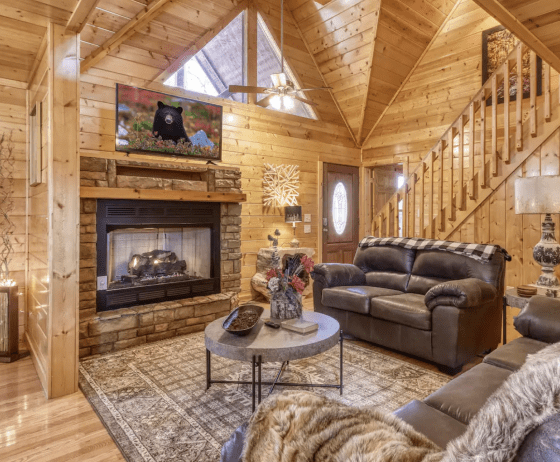

How Great Photos Increased Cabin Rental Rates by 64%

We’ve all heard that “a picture is worth a thousand words” and know it has some truth to it. As cabin property managers in the Gatlinburg / Pigeon Forge area, we’ve learned to take that old adage seriously! No matter how well-written your Airbnb/Vrbo listings are, photos are the first thing people look at when […]

Five Design Tips to Increase Your Nightly Rental Rates (With a Case Study)

There’s a common misconception among short-term rental property owners that interior design is an unwanted expense that they can easily avoid. But think about it for a second… Suppose you’re going on a vacation with your family or taking time for a workcation away from home. Would you rather stay in an Airbnb/Vrbo place […]

You do your thing. Leave the rest to us.

your vacation rental, and make it more profitable